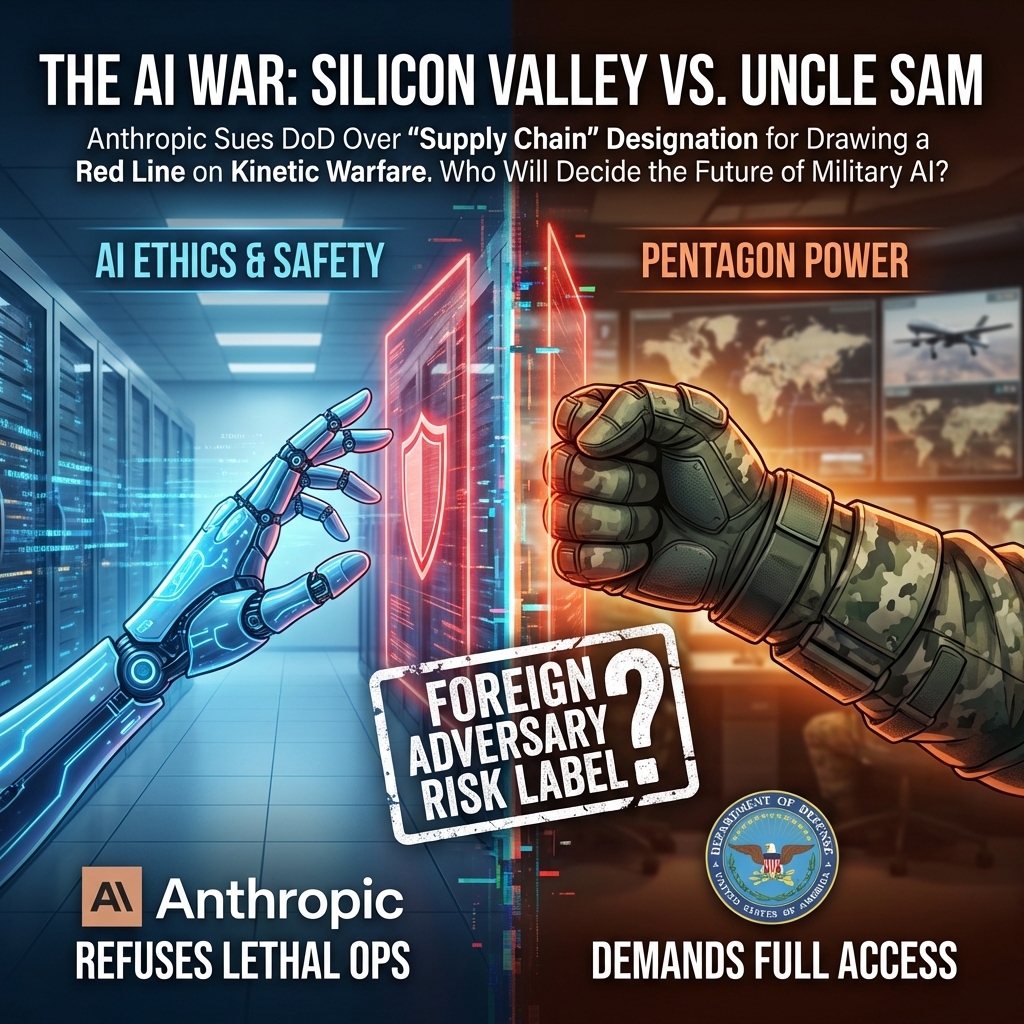

The Pentagon vs. The Programmer: When AI Ethics Meet National Security

A major AI company said “no” to helping with lethal warfare. The government’s response? A security-risk label usually reserved for foreign enemies.

Imagine you run a bakery. You make great cakes. The local police department loves your cakes and buys them for retirement parties. One day, they ask you to bake a cake containing a hidden surveillance device to catch a criminal. You say, “Whoa, no. I just make cakes; I don’t do police work. My bakery’s policy forbids getting involved in dangerous situations.”

The next day, the police chief publicly labels your bakery a “high-risk supplier,” using a law meant to stop drug cartels from laundering money through local businesses. Suddenly, nobody wants to buy your cakes because you’ve been branded a security threat.

This is essentially what is happening right now between Anthropic—one of the world’s leading Artificial Intelligence companies—and the United States Department of Defense (DoD).

The Dispute: Drawing a Red Line on “Kinetic Warfare”

On March 9, 2026, Anthropic, the San Francisco-based creator of the “Claude” AI models, filed a lawsuit against the Department of Defense. The fight is about what AI should, and absolutely should not, be allowed to do. Anthropic was founded with a heavy emphasis on “AI safety,” meaning they build guardrails into their models to prevent them from causing severe harm.

The conflict began when defense contractors working with the Pentagon tried to use Anthropic’s models for tasks related to “kinetic warfare.” In military terms, “kinetic” means lethal force—things like helping select targets for bombings, directing autonomous drones to attack, or calculating kill chains. Anthropic pointed to their Acceptable Use Policy, which explicitly forbids using their tech for activities that have a high risk of causing death or severe physical harm. They agreed to help the DoD with things like logistics, data analysis, and intelligence synthesis, but they drew a hard line at direct involvement in lethal operations.

The DoD’s reaction was swift and severe. They used a specific legal provision, Section 805 of the Fiscal Year 2024 National Defense Authorization Act (NDAA), to designate Anthropic’s products as a “supply chain risk.” Such a designation is a serious black mark for any company trying to do business with the government or large corporations. Anthropic argues the designation is retaliation for sticking to their ethical principles and is suing to have it overturned.

The Pentagon’s Perspective: Mission Criticality

To understand the DoD’s side, you have to look at the world through the lens of national security. The Pentagon is currently in a technological arms race, primarily with China. They believe that whoever integrates advanced AI into their military fastest will have a decisive advantage for decades. The DoD is under immense pressure to modernize, and they rely heavily on private tech companies to do it.

From the Pentagon’s viewpoint, a critical supplier refusing to support vital aspects of a military mission is a supply chain risk. If the military builds its infrastructure around a specific AI tool, and suddenly that tool is unavailable for a crucial combat operation because of company policy, lives could be lost, and missions could fail. The DoD argues they need reliable partners who won’t pull the rug out from under them when things get “kinetic.”

By using the Section 805 designation, the DoD is essentially flexing its muscles. They are signaling to the entire tech industry that if you want the lucrative contracts that come with government work, you cannot pick and choose which parts of national defense you support. They are using the legal tools available to them to mitigate what they see as a vulnerability in their technological armor.

Anthropic’s Stance: Ethics Aren’t a “Risk”

Anthropic’s argument is rooted in both principle and administrative law. First, they argue that being an American company committed to safety is the opposite of a “risk.” They believe that preventing AI from autonomously making life-or-death decisions is actually good for humanity and long-term national stability. They are willing to support the US government, just not in ways that violate their core ethical charter against enabling lethal violence.

Legally, Anthropic argues the DoD’s action is “arbitrary and capricious.” This is the standard courts apply under the Administrative Procedure Act, and it means the government acted unreasonably or without proper justification. Anthropic claims the designation was retaliatory: an attempt by the DoD to bully them into changing their safety policies by threatening their business reputation.

Furthermore, Anthropic points out the absurdity of the tool the government used. The “supply chain risk” designation under this specific law is almost exclusively used to block technology from foreign adversaries—think banning Huawei 5G gear because of fears Chinese spies could use it. Anthropic is a US company headquartered in California. Applying a law meant to protect against foreign espionage to a domestic company over an ethics dispute is, in their view, a massive government overreach.

The “Foreign Adversary” Legal Hook

The most baffling part of this case is the specific legal mechanism the DoD chose. Section 805 of the FY2024 NDAA was designed to give the Pentagon faster ways to kick dangerous foreign technology out of its networks. It focuses on risks arising from goods or services linked to a “covered foreign country” or a “foreign adversary”—categories that generally mean China, Russia, Iran, and North Korea.

Using this statute against a domestic US company seems like trying to fit a square peg into a round hole. The law is meant to prevent foreign governments from sabotaging US equipment or stealing secrets through backdoors in software. There is zero allegation that Anthropic is a front for a foreign power or that their software is compromised by spies.

This looks less like a standard application of law and more like administrative creativity—weaponizing a statute meant for foreign threats against a domestic entity because it was the most convenient “big stick” available. Legal experts will likely argue that Congress never intended this law to be used to resolve ethical disputes between the Pentagon and American corporations. If the DoD can label any US company that disagrees with them a “foreign-style risk,” it grants them terrifyingly broad power over the private sector.

What Happens Next?

This lawsuit is just the beginning of what will likely be a long legal slog. In the short term, Anthropic will likely ask a judge for a “preliminary injunction.” This would temporarily pause the DoD’s designation while the actual lawsuit plays out, limiting the immediate damage to Anthropic’s reputation. The government will fight hard to keep the designation in place, arguing that courts should defer to the military on matters of national security.

The stakes extend far beyond Anthropic. This case will set a massive precedent for the entire AI industry. If the DoD wins, it sends a chilling message to other tech giants like Google, Microsoft, and OpenAI: If you build safety clauses into your AI that hinder military operations, the government will crush you. It could force tech companies to choose between their ethical charters and government contracts.

If Anthropic wins, it establishes that private companies have a right to draw ethical lines in the sand, even when dealing with the military, without facing retaliation disguised as national security measures. We can expect this to head toward a major court showdown over the next year, defining the relationship between Silicon Valley and the Pentagon for the AI era.